Summary first: If you feel the need to cloak, just do it, within reason. Don’t cloak because you can, but because it’s technically the most elegant way to accomplish a Web development task. Bing and Google can’t detect your (in no way deceptive) intent algorithmically. Don’t spam away, though: unless you’re really good at spamming search engines, you might leave trails other than the cloaking itself. Keep your users’ interests in mind. Don’t treat search engine guidelines as set in stone, but comply with them to a reasonable degree, for example when they force you to comply with Web standards that make more sense than the fancy internationalization idea you’ve developed based on detecting browser language settings or so.

This pamphlet is an opinion piece. What I said above should be considered best practice, even by search engines. Of course it isn’t, because search engines can and do fail, just like a webmaster who takes my statement “go cloak away if it makes sense” as technical advice and gets his search engine visibility tanked the hard way.

WTF is cloaking?

Cloaking, also known as IP delivery, means delivering content tailored for specific users who are identified primarily by their IP addresses, but also by user agent (browser, crawler, screen reader…) names, and whatnot. Here’s a simple demonstration of this technique. The content of the next paragraph differs depending on the user requesting this page. Googlebot, Googlers, as well as Matt Cutts at work, will read a personalized message:

Dear visitor, thanks for your visit from 207.241.234.62 (crawl503.us.archive.org).
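For the technically curious, here is a minimal Python sketch of how such a per-visitor paragraph could be chosen. It is not the code behind this page. The office network, the special messages, and the function names are hypothetical; the crawler check follows the reverse-then-forward DNS verification Google has documented for Googlebot, because a user agent string alone is trivial to fake. The DNS functions are injectable, so the logic can be tried without network access.

```python
import ipaddress
import socket
from typing import Callable, Optional

# Hypothetical "office" network, using the RFC 5737 documentation range.
HYPOTHETICAL_OFFICE_NET = ipaddress.ip_network("192.0.2.0/24")

# Reverse-DNS suffixes Google has documented for genuine Googlebot hosts.
GOOGLEBOT_SUFFIXES = (".googlebot.com", ".google.com")


def is_verified_googlebot(
    ip: str,
    reverse_lookup: Callable[[str], tuple] = socket.gethostbyaddr,
    forward_lookup: Callable[[str], tuple] = socket.gethostbyname_ex,
) -> bool:
    """Reverse-resolve the IP, check the host name, then resolve it forward again."""
    try:
        host = reverse_lookup(ip)[0]
        if not host.endswith(GOOGLEBOT_SUFFIXES):
            return False
        # Anyone can set arbitrary reverse DNS for their own IPs, so the
        # forward lookup must map the host name back to the same IP.
        return ip in forward_lookup(host)[2]
    except OSError:  # socket.herror and socket.gaierror subclass OSError
        return False


def pick_paragraph(
    ip: str,
    user_agent: str,
    host: Optional[str] = None,
    verify: Callable[[str], bool] = is_verified_googlebot,
) -> str:
    """Return the paragraph to deliver to this particular requester."""
    if ipaddress.ip_address(ip) in HYPOTHETICAL_OFFICE_NET:
        return "Hello, colleague! Shouldn't you be working?"  # hypothetical text
    if "Googlebot" in user_agent and verify(ip):
        return "Hello, Googlebot! Enjoy your crawl."  # hypothetical text
    where = f"{ip} ({host})" if host else ip
    return f"Dear visitor, thanks for your visit from {where}."
```

The expensive DNS check only runs when the user agent already claims to be Googlebot, so ordinary visitors cost nothing extra.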

You can surely imagine that cloaking opens a can of worms, er, lots of opportunities to enhance a user’s surfing experience, besides “stalking” particular users like Google’s head of WebSpam.

Why do search engines dislike cloaking?

Apparently they don’t. They use IP delivery themselves. When you’re traveling in Europe, you’ll get hints like “go to Google.fr” or “go to Google.at” all the time. That’s google.com checking where you are, trying to lure you into its regional services.

More seriously, there’s a so-called “dark side of cloaking”. Say you’re a seasoned Internet marketer: you could show Googlebot an educational page with compelling content under a URI like “/games/poker”, with an X-Robots-Tag HTTP header saying “noarchive”, whilst surfers (search engine users) who supply an HTTP_REFERER and don’t come from employee.google.com get redirected to poker dot com (simplified example).

That’s hard for Google’s WebSpam team to detect. Because they don’t do evil themselves, they can’t officially operate sneaky bots that use, for example, AOL as their ISP to compare your spider fodder with the pages and redirects served to actual users.

Bing sends out spam bots that request your pages “as a surfer” in order to discover deceptive cloaking. Of course those bots can be identified, so professional spammers serve them their spider fodder. Besides burning the bandwidth of non-cloaking sites, Bing doesn’t accomplish anything useful in terms of search quality.

Because search engines can’t detect cloaking properly, not to speak of a cloaking webmaster’s intentions, they’ve launched webmaster guidelines (FUD) that forbid cloaking altogether. All Google/Bing reps tell you that cloaking is an evil black hat tactic that will get your site penalized or even banned. By the way, the same goes for perfectly legit “hidden content” that’s invisible on page load but viewable after a mouse click on a “learn more” widget/link or so.

Bullshit.

If your competitor makes creative use of IP delivery to enhance their visitors’ surfing experience, you can file a spam report for cloaking, and Google/Bing will eventually ban the site, just because cloaking can be used with deceptive intent. And yes, it works this way. See below.

Actually, those spam reports trigger a review by a human, so maybe your competitor gets away with it. But search engines also use spam reports to develop spam filters that penalize crawled pages fully automatically. Such filters can fail, and, trust me, they often do. Once you need to optimize your own content delivery for particular users or user groups, such a filter could tank your very own stuff by accident. So don’t snitch on your competitors, because tomorrow they’ll return the favor.

Enforcing a “do not cloak” policy is evil

At least Google’s WebSpam team has cojones. They’ve even banned their very own help pages for “cloaking”, although those didn’t serve porn to minors searching for SpongeBob images with safe-search=on.

That’s over the top, because the help files of any Google product aren’t usable without a search facility. When I click “help” in any Google service like AdWords, I get blank pages, and/or links within the help system are broken because the destination pages were deindexed for cloaking. Plain evil, and counterproductive.

Just because Google’s help software doesn’t show ads and related links to Googlebot, those pages aren’t guilty of deceptive cloaking. Ms Googlebot won’t pull the plastic, so it makes no sense to serve her advertisements. Related links are context-sensitive just like ads, so it makes no sense to persist them in Google’s crawling cache, or even in Google’s search index. Also, as a user I really don’t care whether Google has crawled the same heading I see on a help page or not, as long as I get directed to relevant content, that is, a paragraph or more that answers my question.
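A minimal Python sketch of the kind of delivery described above. The page model and the list of crawler user-agent tokens are hypothetical, and this is not how Google’s help software actually works (nothing here describes its internals): the heading and the answer text are identical for everyone, and only the context-sensitive extras are left out for crawlers.

```python
from dataclasses import dataclass, field
from html import escape
from typing import List

# Hypothetical crawler tokens; production code should also verify bots (e.g. via DNS).
CRAWLER_TOKENS = ("googlebot", "bingbot")


def looks_like_crawler(user_agent: str) -> bool:
    ua = user_agent.lower()
    return any(token in ua for token in CRAWLER_TOKENS)


@dataclass
class HelpPage:  # hypothetical page model
    heading: str
    body: str
    ads: List[str] = field(default_factory=list)
    related_links: List[str] = field(default_factory=list)


def render(page: HelpPage, user_agent: str) -> str:
    # The content that answers the question is the same for every requester.
    parts = [f"<h1>{escape(page.heading)}</h1>", f"<p>{escape(page.body)}</p>"]
    if not looks_like_crawler(user_agent):
        # Ads and related links are context-sensitive, so only humans get them.
        parts += [f'<div class="ad">{escape(ad)}</div>' for ad in page.ads]
        parts += [f'<a href="{escape(url)}">{escape(url)}</a>' for url in page.related_links]
    return "\n".join(parts)
```

In this design the crawler’s copy is a prefix of what a human gets: nothing Googlebot sees is withheld from users, only the extras are missing.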

When a search engine intentionally withholds the very best search results just because those pages violate an outdated and utterly useless policy, one that targets fraudulent tactics in a form last seen in the previous century and ignores how the Internet works today, I’m pissed.

Maybe that’s not bad at all when applied to Google products? Bullshit, again. The same happens to any other website that doesn’t fit Google’s weird idea of “serving the same content to users and crawlers”. I mean, as long as Google’s crawlers come from US IPs only, how can a US-based webmaster serve the same German-language content to a user coming from Austria and to Googlebot, when both request a URI like “/shipping-costs?lang=de” whose content has to differ for each user, because shipping a parcel to Germany costs $30.00 while a parcel of the same weight shipped to Vienna costs $40.00? Don’t tell me that bothering a user with the shipping fees for all regions in CH/AT/DE on one page is a good idea, when I can reduce the information overload to just the one shipping fee my user expects to see, followed by a link to a page that lists shipping costs for all European countries, or for all countries where at least some folks might speak or understand German.
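Here is a minimal Python sketch of the tailored shipping snippet described above. The $30.00 and $40.00 fees come from the example; everything else is an assumption: the country code is expected to come from a GeoIP lookup that isn’t shown, and the URL of the full price list and the German wording are hypothetical. Switzerland is deliberately missing because the example gives no fee for it.

```python
from decimal import Decimal
from typing import Optional

# Fees from the example (same parcel weight); no other countries are known here.
SHIPPING_FEES_USD = {"DE": Decimal("30.00"), "AT": Decimal("40.00")}
COUNTRY_NAMES_DE = {"DE": "Deutschland", "AT": "Österreich"}

# Hypothetical URL of the page listing shipping costs for every country.
ALL_FEES_URL = "/shipping-costs/all?lang=de"


def shipping_snippet(country_code: Optional[str]) -> str:
    """Show the one fee this visitor expects, plus a link to the full list.

    country_code is assumed to come from a GeoIP lookup done elsewhere.
    """
    link = f'<a href="{ALL_FEES_URL}">Alle Versandkosten</a>'
    code = (country_code or "").upper()
    fee = SHIPPING_FEES_USD.get(code)
    if fee is None:
        # Unknown location (e.g. a US-based crawler): no guessing, only the link.
        return link
    return f"<p>Versandkosten nach {COUNTRY_NAMES_DE[code]}: ${fee}</p>\n{link}"
```

Note what a US-based crawler gets here: no fee at all, just the link, which is exactly the mismatch between what users and Googlebot see that the paragraph above complains about.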

Back to Google’s ban of its very own help pages that hid AdSense code from Googlebot. Of course Google wants to see what surfers see in order to deliver relevant search results, and that might include advertisements. However, surrounding ads don’t necessarily obfuscate a page’s content. Ads served instead of content do. So if Google wants to detect ad-laden thin pages, it needs to become smarter. Penalizing pages that don’t show ads to search engine crawlers is a bad idea for a search engine, because for a webmaster, not showing ads to crawlers is a good idea, and not only bandwidth-wise.

Managing this dichotomy is the search engine’s job. Search engines shouldn’t expect webmasters to help them solve their very own problems (maintaining search quality). In fact, bothering webmasters with policies that exist solely because search engine algos are fallible and incapable is plain evil. The same applies to instruments like rel-nofollow (launched to help Google devalue spammy links, but backfiring enormously) or Google’s war on paid links (as if each and every link on the whole Internet weren’t paid or bartered for, somehow).

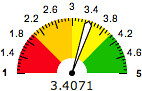

What do you think, should search engines ditch their way too restrictive “don’t cloak” policies?

Update 2010-07-06: Don’t miss out on Danny Sullivan’s “Google be fair!” appeal, posted today: Why Google Should Ban Its Own Help Pages — But Also Shouldn’t
